Navigating the Future: The Evolution of Spatial Computing

A deep dive into the technologies driving spatial computing and their real-world applications

Introduction

Spatial computing integrates the digital layer with the physical world, enabling users to interact with digital information in their environment. The technology supports various modes of interaction, such as visual, auditory, and haptic feedback, and is designed to be unobtrusive. It aims to blur the lines between digital and physical spaces, enhancing everyday tasks by providing real-time information and interactions. This approach aligns with the concept of ubiquitous computing, where technology seamlessly integrates into daily activities.

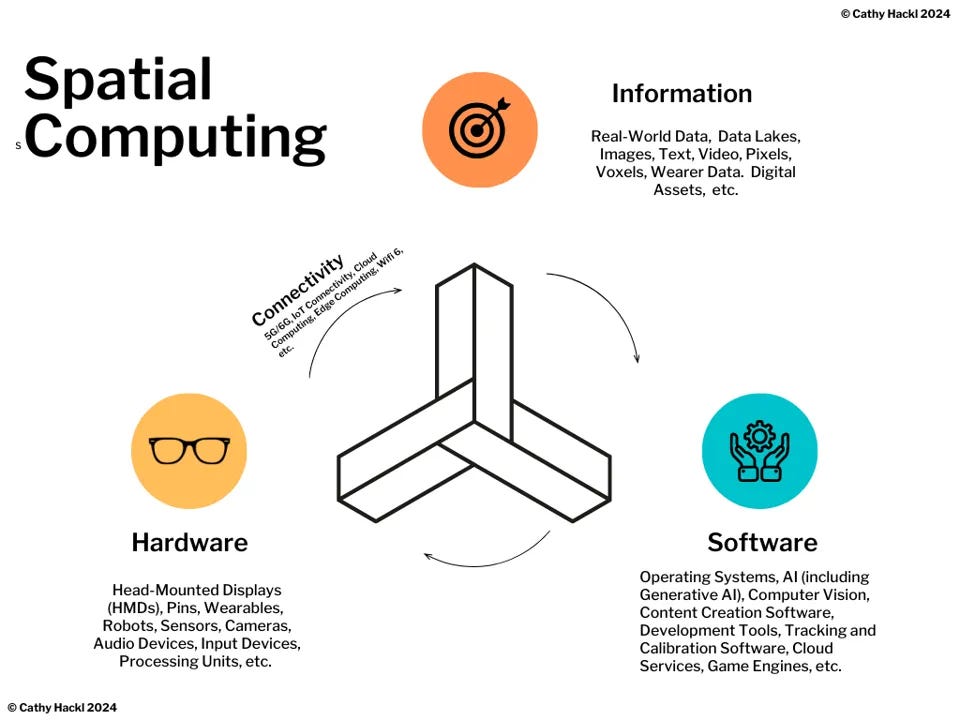

Figure 1: Spatial computing is an evolving, 3D-centric form of computing that, at its core, uses AI, computer vision, and extended reality to blend virtual experiences into the physical world, making almost every surface a spatial interface. It combines software, hardware, data/information, and connectivity. [1]

Spatial computing relies on three key elements: 3D data, location, and time. 3D data enriches content by adding spatial depth, although this requires substantial computing power and storage. Location precision is vital for accurately overlaying digital information onto the physical world, as seen in industrial digital twins where millimeter accuracy is crucial. Time allows for tracking changes, analyzing historical trends, and forecasting future scenarios, making it essential for dynamic interactions within spatial computing environments. [2]
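The three elements can be made concrete with a small data model. The Python sketch below assumes positions in metres and timestamps in seconds. The `SpatialSample` type, the 1 mm default tolerance, and the constant-velocity forecast are illustrative assumptions, not part of any particular spatial computing platform; production systems use far richer tracking and forecasting models.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SpatialSample:
    """Hypothetical record: 3D position in metres (3D data, location) plus a timestamp in seconds (time)."""
    x: float
    y: float
    z: float
    t: float


def distance_m(a: SpatialSample, b: SpatialSample) -> float:
    """Euclidean distance between two samples in metres, ignoring time."""
    return math.dist((a.x, a.y, a.z), (b.x, b.y, b.z))


def within_tolerance(overlay: SpatialSample, measured: SpatialSample, tol_mm: float = 1.0) -> bool:
    """Check whether a digital overlay lies within tol_mm of the measured physical point.

    The 1 mm default is an assumed tolerance, echoing the millimetre accuracy
    mentioned for industrial digital twins.
    """
    return distance_m(overlay, measured) * 1000.0 <= tol_mm


def forecast(history: list[SpatialSample], t_future: float) -> SpatialSample:
    """Extrapolate the position at t_future from the last two samples.

    Assumes constant velocity, the simplest possible trend model.
    """
    if len(history) < 2:
        raise ValueError("need at least two samples to estimate velocity")
    p0, p1 = history[-2], history[-1]
    dt = p1.t - p0.t
    if dt <= 0:
        raise ValueError("samples must be in strictly increasing time order")
    k = (t_future - p1.t) / dt  # how many 'last intervals' ahead we are projecting
    return SpatialSample(
        p1.x + k * (p1.x - p0.x),
        p1.y + k * (p1.y - p0.y),
        p1.z + k * (p1.z - p0.z),
        t_future,
    )


if __name__ == "__main__":
    track = [SpatialSample(0.0, 0.0, 0.0, 0.0), SpatialSample(1.0, 2.0, 0.0, 1.0)]
    print(forecast(track, 3.0))
    print(within_tolerance(SpatialSample(0, 0, 0, 0), SpatialSample(0.0005, 0, 0, 0)))
```

Keeping time as an explicit field, rather than overwriting positions in place, is what allows the historical-trend and forecasting uses described above.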

The U.S. spatial computing market was estimated at USD 27.59 billion in 2023 and is projected to reach about USD 244.40 billion by 2034, a compound annual growth rate (CAGR) of 21.9% from 2024 to 2034. [3]
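The quoted figures can be sanity-checked with the standard CAGR formula, CAGR = (end / start)^(1 / years) - 1. The sketch below applies it to the two values in the forecast; treating 2023 to 2034 as 11 compounding years is an assumption about how the source counts the period.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value after compounding start_value at `rate` per year for `years` years."""
    return start_value * (1.0 + rate) ** years


# Market figures quoted above, in USD billions (assumed 11 compounding years, 2023 -> 2034).
start_2023, end_2034 = 27.59, 244.40

print(f"implied CAGR: {cagr(start_2023, end_2034, 11):.1%}")
print(f"27.59 grown at 21.9% for 11 years: {project(start_2023, 0.219, 11):.1f}")
```

Compounding USD 27.59 billion over those 11 years implies a rate of roughly 21.9%, consistent with the quoted CAGR; the small gap between the projected and quoted 2034 values comes from rounding the rate to one decimal place.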

Challenges in Spatial Computing Development and Adoption

Spatial Computing Strategy of the Hyperscalers

Spatial Computing Technology Stack

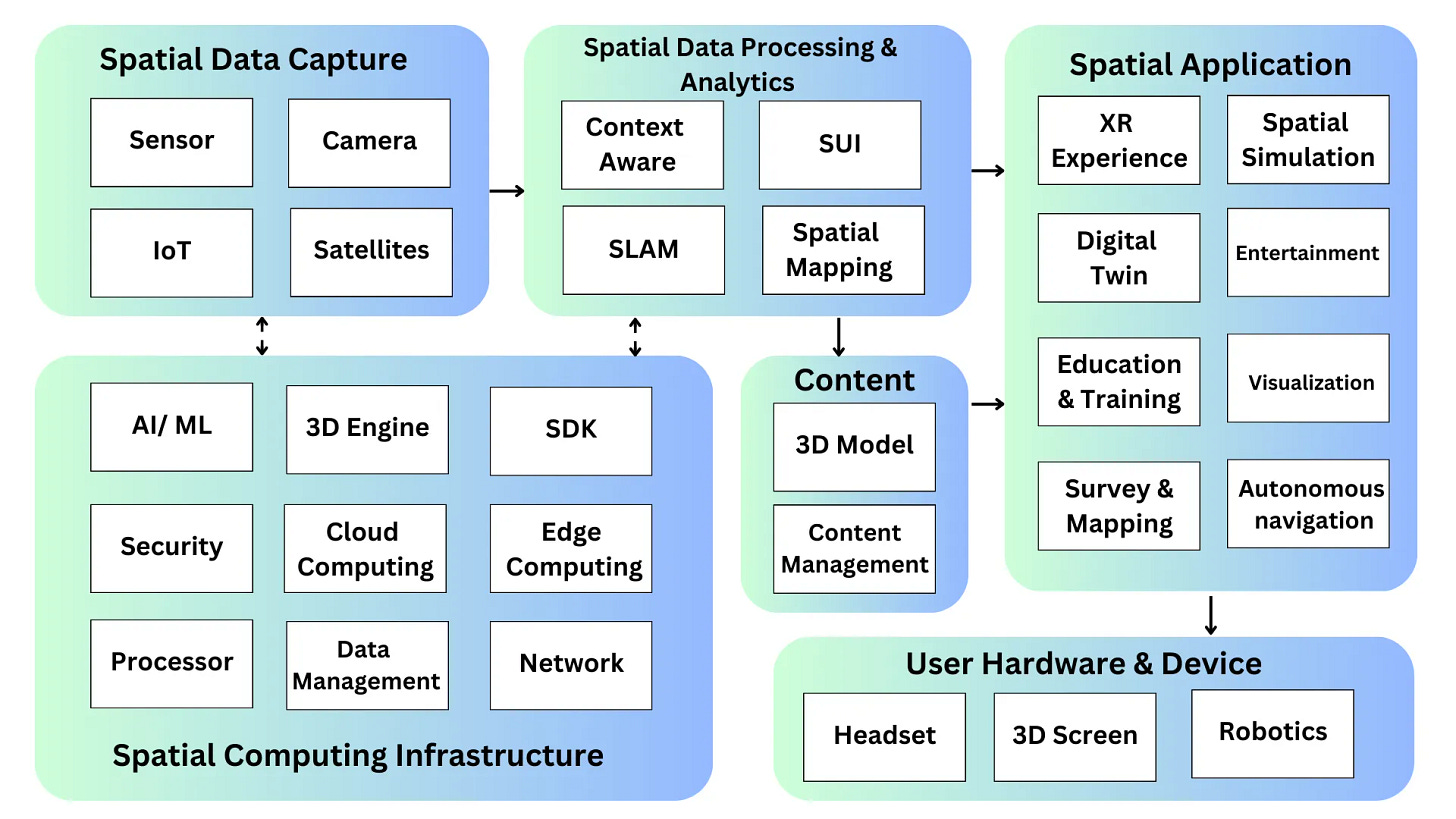

The spatial computing tech stack combines general-purpose and spatial-specific components that together enable immersive digital interactions. General-purpose components include foundational hardware such as processors, along with standard networking, security, and cloud computing services that support data management and connectivity. Spatial-specific components include sensors, cameras, and IoT devices for data capture; software tools and SDKs tailored for spatial data processing and 3D visualization; and user-facing hardware such as headsets.

Figure 2: Spatial Computing Tech Stack by Clarice Qiu

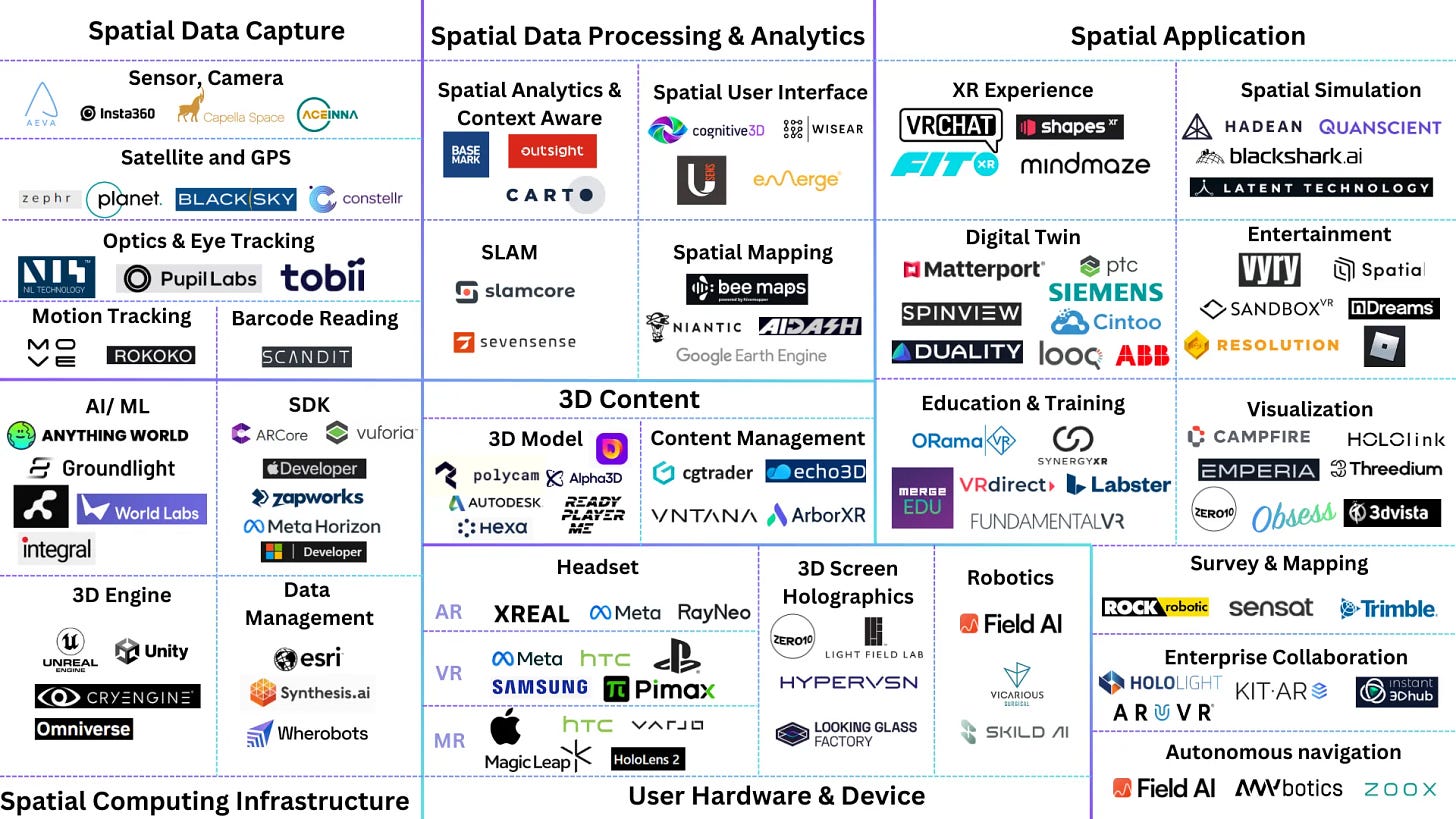

Figure 3: Spatial Computing Market Mapping by Clarice Qiu

Spatial Data Capture: Sensor Networks, IoT, and Data Fusion

Spatial Data Processing, Analytics and Generation

Spatial Computing Infrastructure

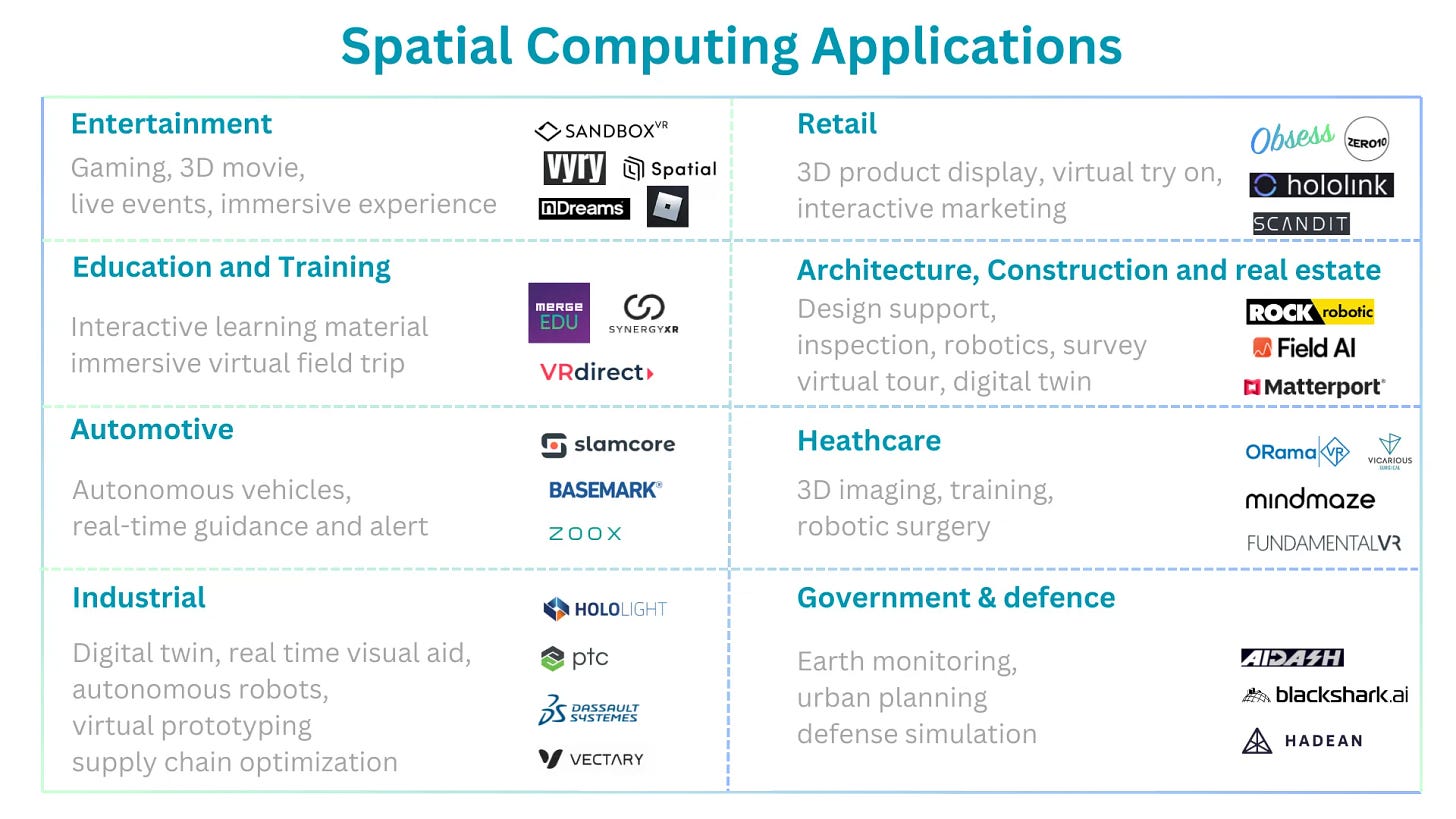

Spatial Computing Applications

Figure 9: Key spatial computing applications by Clarice Qiu

The Future of Spatial Computing

Integration with AI and Machine Learning: Enhanced AI models will profoundly influence spatial computing by improving the accuracy and efficiency of spatial data analysis. This will lead to innovations in navigation systems, dynamic environmental monitoring, and personalized augmented reality (AR) applications, as well as more sophisticated robots that interact with their environments in intuitive ways.

Advanced IoT Integration: The proliferation of IoT devices will enrich spatial data inputs, significantly affecting urban planning, logistics, industrial operations, and infrastructure management. These devices will enable real-time spatial data to be integrated seamlessly across networks, improving operational efficiency and enabling smarter city solutions.
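When several IoT sensors report the same object's position, their readings have to be combined. One common, simple approach is inverse-variance weighting, which assumes each sensor's error is independent and unbiased. The sketch below illustrates that general technique; it is not drawn from any specific platform, and the `Reading` type and 2D positions are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    """Hypothetical position estimate from one sensor: 2D position in metres, variance in m^2."""
    sensor_id: str
    position: tuple[float, float]
    variance: float


def fuse(readings: list[Reading]) -> tuple[tuple[float, float], float]:
    """Inverse-variance weighted mean of independent position estimates.

    Each reading is weighted by 1 / variance, so more precise sensors count more.
    Returns the fused position and the fused variance (1 / sum of weights),
    which is never larger than the best individual variance.
    """
    if not readings:
        raise ValueError("no readings to fuse")
    if any(r.variance <= 0 for r in readings):
        raise ValueError("variances must be positive")
    weights = [1.0 / r.variance for r in readings]
    total = sum(weights)
    x = sum(w * r.position[0] for w, r in zip(weights, readings)) / total
    y = sum(w * r.position[1] for w, r in zip(weights, readings)) / total
    return (x, y), 1.0 / total


if __name__ == "__main__":
    # A precise camera-based estimate and a noisier beacon-based estimate (illustrative values).
    camera = Reading("camera-1", (0.0, 0.0), 1.0)
    beacon = Reading("beacon-7", (2.0, 0.0), 3.0)
    print(fuse([camera, beacon]))
```

The fused estimate lands closer to the more precise sensor, and its variance shrinks as sensors are added, which is why denser IoT coverage tends to improve spatial data quality.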

Enhanced Real-Time Data Processing: Future spatial computing will benefit from advancements in computing power and algorithm optimization, allowing for the real-time processing of complex spatial data. This capability is crucial for applications requiring immediate responses, such as autonomous vehicles and emergency response systems.
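Algorithmic choices matter as much as raw compute for real-time work. As one illustration (a swapped-in example, not a technique the article names), a uniform grid, sometimes called a spatial hash, lets an autonomous system query only the obstacles near it instead of scanning every tracked object. The `UniformGrid` class and the cell size below are hypothetical.

```python
import math
from collections import defaultdict


class UniformGrid:
    """Hypothetical 2D spatial hash: buckets points into square cells of side `cell` metres,
    so a radius query only inspects cells overlapping the query's bounding box."""

    def __init__(self, cell: float) -> None:
        if cell <= 0:
            raise ValueError("cell size must be positive")
        self.cell = cell
        self.cells: dict[tuple[int, int], list[tuple[str, float, float]]] = defaultdict(list)

    def _key(self, x: float, y: float) -> tuple[int, int]:
        # floor() keeps negative coordinates in the correct cell.
        return (math.floor(x / self.cell), math.floor(y / self.cell))

    def insert(self, obj_id: str, x: float, y: float) -> None:
        self.cells[self._key(x, y)].append((obj_id, x, y))

    def query_radius(self, x: float, y: float, r: float) -> list[str]:
        """Return ids of points within distance r of (x, y)."""
        cx0, cy0 = self._key(x - r, y - r)
        cx1, cy1 = self._key(x + r, y + r)
        hits = []
        for cx in range(cx0, cx1 + 1):
            for cy in range(cy0, cy1 + 1):
                # .get avoids creating empty buckets in the defaultdict.
                for obj_id, px, py in self.cells.get((cx, cy), ()):
                    if math.hypot(px - x, py - y) <= r:
                        hits.append(obj_id)
        return hits


if __name__ == "__main__":
    grid = UniformGrid(cell=5.0)
    grid.insert("pedestrian", 1.0, 1.0)
    grid.insert("cyclist", 3.0, 4.0)
    grid.insert("truck", 50.0, 50.0)
    print(sorted(grid.query_radius(0.0, 0.0, 6.0)))
```

Choosing a cell size close to the typical query radius keeps each lookup to a handful of cells; far-away objects such as the truck are never examined.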

Ubiquitous AR/VR Including Mobile AR: AR and VR technologies will become common in daily activities and across industries, with headset-free XR such as mobile AR making digital interactions more accessible and immersive. XR will also become more deeply integrated into professional tools, educational programs, and recreational activities.

Improved Wearable Technology: Wearables will evolve to be lighter and more powerful, with enhanced resolution and longer battery life. This will make AR glasses and VR headsets more practical for continuous use in various settings, from industrial applications to consumer entertainment, further mainstreaming spatial computing technologies.